Object-oriented languages have taught us to think in terms of objects (or nouns), and Java is yet another incarnation of the land of nouns. When was the last time you saw an elegant piece of Swing code? Steve Yegge is merciless when he rants about it, and rightly so:

Building UIs in Swing is this huge, festering gob of object instantiations and method calls. It's OOP at its absolute worst.

There are ways of making OOP smart: we have been talking about fluent interfaces, OO design patterns, AOP and higher levels of abstraction similar to those of DSLs. But the real word is *productivity*, and the language needs to make its users elegantly productive. Unfortunately, in Java we often find people generating reams of boilerplate (aka getters and setters) that looks like pure copy-paste. Java abstractions thrive on the evil loop of the 3 C's, create-construct-call, along with liberal litterings of getters and setters. You create a class, declare 5 read-write attributes, and you have a pageful of code before you have written a single piece of actual functionality. Object orientation discourages public attributes and restricts the visibility of implementation details, but it never prevents a language from providing elegant constructs to handle the boilerplate. Ruby does this, and does it with elan.
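To make the boilerplate concrete, here is a minimal sketch of a class with just two read-write attributes. The `Employee` class and its fields are hypothetical, made up purely for illustration; in Ruby, a single `attr_accessor :name, :email` line would cover the same accessors.

```java
// Hypothetical example class; the names are illustrative only.
class Employee {
    private String name;
    private String email;

    // Four methods whose only job is to expose two fields.
    public String getName() { return name; }
    public void setName(String name) { this.name = name; }

    public String getEmail() { return email; }
    public void setEmail(String email) { this.email = email; }
}

class EmployeeDemo {
    public static void main(String[] args) {
        // create-construct-call: every attribute is set by hand, one call at a time
        Employee employee = new Employee();
        employee.setName("Alice");
        employee.setEmail("alice@example.com");
        System.out.println(employee.getName() + " <" + employee.getEmail() + ">");
    }
}
```

With five attributes instead of two, the accessor section alone grows to ten methods before any behaviour has been written.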

Java is not Orthogonal

Paul Graham, in On Lisp, defines orthogonality of a language as follows:

An orthogonal language is one in which you can express a lot by combining a small number of operators in a lot of different ways.

He goes on to explain how the complement function in Lisp has got rid of half of the *-if-not* functions from pairs like [remove-if, remove-if-not], [subst-if, subst-if-not] etc. Similarly, in Ruby we can have the following orthogonal usage of the "*" operator across data types:

"Seconds/day: #{24*60*60}" will give Seconds/day: 86400

"#{'Ho! '*3}Merry Christmas!" will give Ho! Ho! Ho! Merry Christmas!

C++ supports operator overloading, which is also a minimalistic way to extend your operator usage.

In order to bring some amount of orthogonality in Java we have lots of frameworks and libraries. This is yet another problem of dealing with an impoverished language - you have a proliferation of libraries and frameworks which add unnecessary layers in your codebase and tend to collapse under their weight.

Consider the following code in Java to find a matching sub-collection based on a predicate :

    class Song {
        private String name;
        ...
        ...
    }

    // ...
    // ...
    Collection<Song> songs = new ArrayList<Song>();
    // ...
    // populate songs
    // ...
    String title = ...;
    Collection<Song> sub = new ArrayList<Song>();
    for (Song song : songs) {
        if (song.getName().equals(title)) {
            sub.add(song);
        }
    }

The Jakarta Commons Collections framework adds orthogonality by defining abstractions like Predicate, Closure, Transformer etc., along with lots of helper methods like find(), forAllDo(), select() that operate on them, which help the user do away with boilerplate iterators and for-loops. For the above example, the equivalent will be:

    // select() returns a new collection holding the songs whose getName() equals title
    Collection sub = CollectionUtils.select(songs,
        PredicateUtils.transformedPredicate(
            TransformerUtils.invokerTransformer("getName"),
            PredicateUtils.equalPredicate(title)));

Yuck !! We have got rid of the for-loop, but at the expense of ugly, ugly syntax, loads of statics and the loss of the type safety that we take such pride in with Java. Of course, in Ruby we can do this with much more elegance and less code:

@songs.select {|song| title == song.name }

and this same syntax and structure will work for all sorts of collections and arrays which can be iterated. This is orthogonality.
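For comparison, a type-safe selection can be written in plain Java without any library, though only at the cost of an anonymous inner class. The following is a minimal sketch: `Matcher`, `Selection.select` and the sample titles are hypothetical names introduced here, not part of Commons Collections or of the original example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collection;
import java.util.List;

// Hypothetical generic predicate; not part of Commons Collections.
interface Matcher<T> {
    boolean matches(T candidate);
}

class Song {
    private final String name;
    Song(String name) { this.name = name; }
    String getName() { return name; }
}

class Selection {
    // Returns the elements of source accepted by matcher, in iteration order.
    static <T> List<T> select(Iterable<T> source, Matcher<T> matcher) {
        List<T> result = new ArrayList<T>();
        for (T item : source) {
            if (matcher.matches(item)) {
                result.add(item);
            }
        }
        return result;
    }

    public static void main(String[] args) {
        Collection<Song> songs = Arrays.asList(
                new Song("Yesterday"), new Song("Imagine"), new Song("Yesterday"));
        final String title = "Yesterday";
        // Anonymous inner class standing in for a closure over title.
        List<Song> sub = select(songs, new Matcher<Song>() {
            public boolean matches(Song song) {
                return song.getName().equals(title);
            }
        });
        System.out.println(sub.size()); // prints 2
    }
}
```

The compiler now checks the element type, but the call site still needs five lines of ceremony to express what the Ruby block says in one.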

Another classic example of non-orthogonality in Java is the treatment of arrays as compared to other collections. You can initialize an array as :

String[] animals = new String[] {"elephant", "tiger", "cat", "dog"};

while for Collections you have to fall back to the ugliness of explicit method calls :

    Collection<String> animals = new ArrayList<String>();
    animals.add("elephant");
    animals.add("tiger");
    animals.add("cat");
    animals.add("dog");

Besides, arrays have always been second-class citizens in the Java OO land: they support covariant subtyping (which is unsafe, so every store has to be checked at runtime), cannot be subclassed and, unlike the other collection classes, are not extensible. A classic example of non-orthogonality.
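The unsafety of covariant arrays is easy to demonstrate. The sketch below (class and method names are illustrative) stores an Integer through an Object[] reference that really points at a String[]; the code compiles, and the mistake only surfaces at runtime as an ArrayStoreException. Generic collections, by contrast, are invariant and reject the equivalent assignment at compile time.

```java
import java.util.ArrayList;
import java.util.List;

class CovariantArrays {
    // Stores an Integer through an Object[] view of a String[] and reports
    // whether the JVM rejected the store at runtime.
    static boolean storeFailsAtRuntime() {
        String[] animals = {"elephant", "tiger"};
        Object[] objects = animals;            // legal: arrays are covariant
        try {
            objects[0] = Integer.valueOf(42);  // compiles, but the array is really a String[]
            return false;
        } catch (ArrayStoreException e) {
            return true;
        }
    }

    public static void main(String[] args) {
        System.out.println(storeFailsAtRuntime()); // prints true

        List<String> list = new ArrayList<String>();
        // List<Object> view = list;  // does not compile: generics are invariant
        list.add("cat");
    }
}
```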

Ugly initialization syntax and the lack of support for literals have been among the major failings of Java. Steve Yegge has documented it right to its last bit.
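The closest the standard library comes to a collection literal is probably Arrays.asList, which is still a library call rather than syntax. A minimal sketch, assuming Java 5 varargs; the class and method names are illustrative:

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class AnimalLists {
    // Builds a mutable list from one varargs call instead of repeated add() calls.
    static List<String> animals() {
        return new ArrayList<String>(
                Arrays.asList("elephant", "tiger", "cat", "dog"));
    }

    public static void main(String[] args) {
        List<String> animals = animals();
        animals.add("horse"); // works because the ArrayList copy is resizable
        System.out.println(animals); // prints [elephant, tiger, cat, dog, horse]
    }
}
```

The list returned by Arrays.asList itself is fixed-size, hence the extra wrapping in an ArrayList, which is one more piece of ceremony that a real literal syntax would make unnecessary.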

Java and Extensibility

Being an OO language, Java supports extension of classes through inheritance. But once you define a class, there is no scope for extensibility at runtime: you cannot define additional methods or properties. AOP has been in style of late, and has proved quite effective as an extension tool for Java abstractions. But, once again, it is NOT part of the language and hence does not enrich the Java language semantics. There is no meta-programming support that could make Java friendlier for DSL adoption. Look at this excellent example from a recent blogpost:

Creating some test data for building a tree, the Java way :

    Tree a = new Tree("a");

    Tree b = new Tree("b");
    Tree c = new Tree("c");
    a.addChild(b);
    a.addChild(c);

    Tree d = new Tree("d");
    Tree e = new Tree("e");
    b.addChild(d);
    b.addChild(e);

    Tree f = new Tree("f");
    Tree g = new Tree("g");
    Tree h = new Tree("h");
    c.addChild(f);
    c.addChild(g);
    c.addChild(h);

and the Ruby way :

    tree = a {
      b { d e }
      c { f g h }
    }

It is really this simple - of course you have the meta-programming engine backing you for creating this DSL. What this implies is that, with Ruby you can extend the language to define your own DSL and make it usable for your specific problem at hand.

Java Needs More Syntactic Sugars

Any Turing complete programming language allows programmers to implement the same functionality. Java is a Turing complete language, but it still does not do enough to boost programmer productivity. Brevity is an important feature of a language, and modern day languages like Ruby and Scala offer a lot in that respect. Syntactic sugar is just as important in letting programmers express an implementation concisely. Over the last year or so, we have seen lots of syntactic sugar being added to C# in the form of Anonymous Methods, Lambdas, Expression Trees and Extension Methods. I think Java is lagging far behind in this respect. The smart for-loop is a step in the right direction. But Sun will do the Java community a world of good by offering other syntactic sugars like automatic accessors, closures and lambdas.

Proliferation of LibrariesIn order to combat Java's shortcomings at complexity management, over the last five years or so, we have seen the proliferation of a large number of libraries and frameworks, that claim to improve programmer's productivity. I gave an example above, which proves that there is no substitute for language elegance. These so called productivity enhancing tools are added layers on top of the language core and have been mostly delivered as generic ones which solve generic problems. There you are .. a definite case of

Frameworkitis. Boy, I need to solve this particular problem - why should I incur the overhead of all the generic implementations. Think DSL, my language should allow me to carve out a domain specific solution using a domain specific language. This is where Paul Graham positions Lisp as a

programmable programming language. I am not telling all Java libraries are crap, believe me, some of them really rocks,

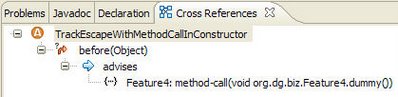

java.util.concurrent is one of the most significant value additions to Java ever and AOP is the closest approximation to meta-programming in Java. Still I feel many of them would not have been there, had Java been more extensible.

Is it Really Static Typing?

I have been thinking really hard about this issue of lack of programmer productivity with Java: is static typing the main issue? Or is it the lack of meta-programming features and of the ability, which languages like Ruby and Lisp offer, to treat code and data interchangeably? I think it is a combination of both. Besides, Java does not support first class functions, does not have closures as yet, and lacks some of the other productivity tools like parallel assignment, multiple return values, user-defined operators, continuations etc. that make a programmer happy. Look at Scala today: it definitely has all of them, and supports static typing as well.

In one of the enterprise Java projects that we are executing, the Maven repository has reams of third party jars (mostly open source) that claim to do a better job of complexity management. I know Ruby is not enterprise ready and Lisp never claimed to deliver performance in a typical enterprise business application, so Java does the best under the current circumstances. And the strongest point of Java is the JVM, possibly the best under the Sun. Initiatives like Rhino integration, JRuby and Jython are definitely in the right direction; we would all love to see the JVM evolve into a friendly nest for dynamic languages. The other day, I was listening to the Gilad Bracha session on "Dynamically Typed Languages on the Java Platform" delivered at Lang.NET 2006. He discussed the invokedynamic bytecode and hotswapping, to be implemented in the near future on the JVM. Possibly this is the future of the Java computing platform: it's the JVM that holds more promise for the future than the core Java programming language.